Why AI Makes Content Moderation Better, Not Worse

Building a political content labeler with AI — what actually works

It is the spring of 2017. I am sitting at my desk at Facebook, staring at a spreadsheet of ad disclaimers.

“Paid for by Mickey Mouse.” “A Concerned Citizen.” “None of Your Business.”

My job, in that moment, was to decide which of these were real political advertisers and which were trolls testing our new system. We were building Facebook’s political ad transparency tool from scratch, under enormous pressure, in the aftermath of Russian interference in the 2016 election. And one of the hardest problems we faced wasn’t the engineering, or the regulatory compliance, or even the identity verification.

It was this: what is a political ad?

That question haunted me for months. It came back to me last week, when Meta announced it is using more advanced AI for content enforcement, and when I spent a few hours playing with a tool called Zentropi that made me realize just how far we’ve come.

Those two experiences are why I read Meta’s AI moderation announcement very differently from most people.

1. The problem with defining “political”

In traditional campaign finance law, the definition starts with the entity. If you’re a candidate, a party, or a PAC, your ads need disclaimers. It’s clean, logical, and completely useless for what Facebook was trying to do.

The Russian ads from 2016 weren’t part of official campaigns. They came from shell pages — Blacktivist, Heart of Texas, Army of Jesus — running issue-based content about race, guns, immigration, and religion. Not election ads in any legal sense. But political in every other way.

So we had to flip the model. Instead of asking who is buying this ad, we had to ask what the ad is about. That meant defining “political” by content rather than by entity. And once you start there, you discover very quickly that almost anything can be politicized.

Our keyword classifier couldn’t distinguish between the former president and a bean company. For a while, Bush’s Baked Beans kept getting flagged as political advertising. It was funny in hindsight. It was not funny at the time.
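To make the failure concrete, here is a minimal Python sketch of a whole-word keyword matcher. The keyword list and the `is_political` function are hypothetical illustrations, not the classifier we actually ran at Facebook. The point is only that a bag of words has no way to tell a president from a can of beans.

```python
import re

# Hypothetical keyword list, for illustration only.
POLITICAL_KEYWORDS = {"bush", "election", "vote", "senator", "immigration"}


def is_political(text: str) -> bool:
    """Flag text as political if any keyword appears as a whole word."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return not POLITICAL_KEYWORDS.isdisjoint(words)


ads = [
    "Former President Bush speaks on immigration",
    "Bush's Baked Beans: perfect for your summer cookout",
    "Get 20% off running shoes this weekend",
]

for ad in ads:
    # The bean ad is flagged too: "bush" is a keyword no matter what surrounds it.
    print(is_political(ad), "|", ad)
```

The matcher sees the token "bush" in both of the first two ads and flags both. Telling them apart requires context, which is exactly what a keyword list lacks.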

We eventually launched the political ad library in time for the 2018 midterms. It was imperfect, clunky in places, and built on judgment calls that I personally agonized over — including sitting with a spreadsheet, Googling “Patriots for America,” and asking myself: legitimate group, or Russian troll farm?

2. What AI-powered policy building looks like now

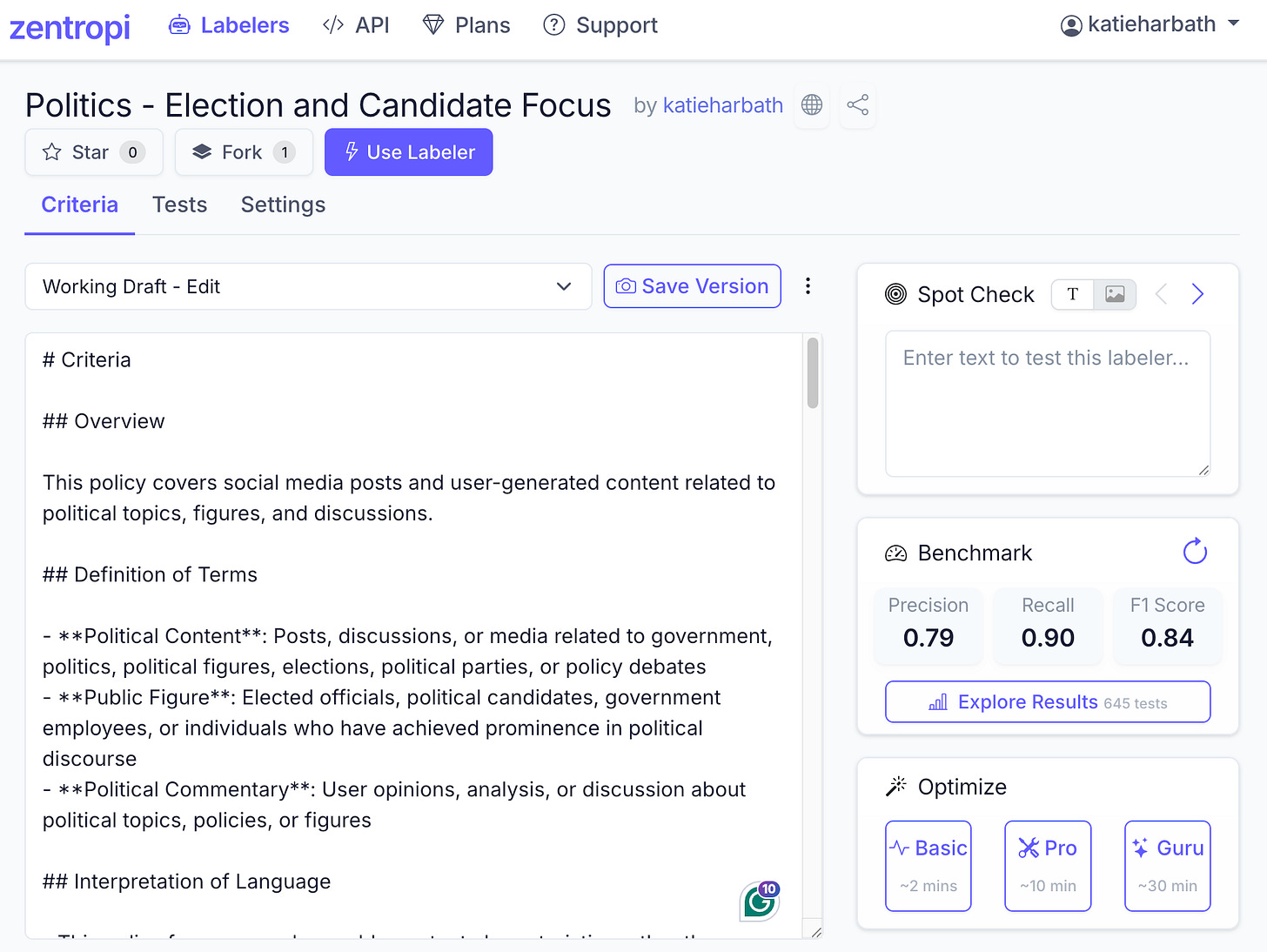

Last week, I spent time with Zentropi AI, a tool that lets you build intelligent text labelers — AI-powered content classifiers — by writing natural-language policies and training them on examples. I know the two founders from their time at Facebook. When they told me what they were building, I immediately thought of that spreadsheet.

I built two political content labelers: a narrow one (elected officials, elections, and voting only) and a broader one (issue-based content, essentially the version Facebook needed in 2017). The hardest lesson was about the data. I didn’t want to upload a list of every elected official in the United States. I needed the policy to recognize patterns, the ways people talk about elected officials, rather than memorize names. Getting that right meant going back and forth between Zentropi and Claude, sharpening round by round what I actually meant by “political.”

The result is better than anything I could have built alone in that timeframe. But I want to be clear: it’s not perfect. Identifying whether a post comes from a political figure is still genuinely hard, and this approach has edges I haven’t fully mapped yet. Consider it a strong starting point, not a solved problem.
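The round-by-round refinement described above amounts to an evaluation loop: label a set of examples by hand, run the current labeler over them, and read its mistakes before revising the policy. The sketch below is a hypothetical, minimal Python version of that loop. It is not Zentropi’s API. A regular expression that matches roles (“senator”, “mayor”) instead of names stands in for the AI labeler, and the example posts are invented for illustration.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class Example:
    text: str
    is_political: bool  # label assigned by a human reviewer


# Hypothetical stand-in for an AI labeler: match roles and election
# vocabulary rather than a list of officials' names.
ROLE_PATTERN = re.compile(
    r"\b(?:senator|governor|mayor|congress(?:man|woman)?|representative|president)\b"
    r"|\bvot(?:e|ing)\b|\belection\b",
    re.IGNORECASE,
)


def role_labeler(text: str) -> bool:
    """Return True if the text mentions an elected role, voting, or an election."""
    return ROLE_PATTERN.search(text) is not None


def evaluate(labeler: Callable[[str], bool], examples: list[Example]) -> dict:
    """Score a labeler against hand-labeled examples and collect its mistakes."""
    false_positives = [e.text for e in examples if labeler(e.text) and not e.is_political]
    false_negatives = [e.text for e in examples if not labeler(e.text) and e.is_political]
    correct = len(examples) - len(false_positives) - len(false_negatives)
    return {
        "accuracy": correct / len(examples),
        "false_positives": false_positives,
        "false_negatives": false_negatives,
    }


# Invented examples for the narrow labeler (officials, elections, voting).
EXAMPLES = [
    Example("Senator Alvarez voted against the farm bill", True),
    Example("Remember to vote on Tuesday", True),
    Example("Bush's Baked Beans: perfect for your cookout", False),
    Example("Our PTA president is hosting a bake sale", False),
    Example("The incumbent is refusing to debate her challenger", True),
]

if __name__ == "__main__":
    report = evaluate(role_labeler, EXAMPLES)
    print(f"accuracy: {report['accuracy']:.0%}")
    for text in report["false_positives"]:
        print("false positive:", text)
    for text in report["false_negatives"]:
        print("false negative:", text)
```

Each error points at a gap in the policy: “president” also describes a PTA officer, and a post about an “incumbent” is election content that no role word catches. Those are the cases worth feeding into the next round.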

What took us months of meetings, false positives, and spreadsheet gut checks in 2017 took me a few hours of work in 2026.

Screenshot of my Zentropi dashboard.

3. Why AI in content moderation is actually good

Meta’s announcement last week is worth reading carefully. They’re deploying more advanced AI systems across their apps to improve content enforcement, catching scams, impersonation, and other severe violations more accurately and at greater scale.

Some people will be skeptical. The story of content moderation AI is, after all, also a story of over-enforcement, cultural blind spots, and classifiers that couldn’t tell beans from presidents.

But the case for AI in this space is real and worth stating clearly.

Content moderation at scale has always required asking human beings to spend their days looking at the worst things on the internet. The mental health toll on those workers has been significant and well-documented. AI doesn’t eliminate human judgment from this loop — it reduces the volume of graphic content that humans have to process, and it handles the repetitive work that machines are genuinely better at.

It also makes policy updates far easier to deploy. Training a new rule into an AI system is fundamentally different from retraining tens of thousands of contractors who each carry slightly different mental models of what a policy means. The consistency alone is worth something.

My read: Meta’s basic framing here is right, even if their track record on follow-through is uneven. AI handles the volume. Humans make the calls that matter — account disablements, law enforcement referrals, the edge cases that require judgment no classifier has yet. The question isn’t whether to use AI for content moderation. It’s whether the policies being encoded are any good — and whether the systems are being tested rigorously for bias, cultural context, and adversarial manipulation.

That’s still a hard problem. But it’s a better problem than the one I was solving with a spreadsheet in 2017.

One last thing

If you work in tech policy and have never actually tried to write a content moderation policy — not describe one, not critique one, but build one — I’d encourage you to try Zentropi. They have open-source policies available, including the two political labelers I built, which you’re welcome to use as a starting point.

The exercise of sitting down and trying to define “political content” precisely enough that a machine can learn it from examples is humbling in exactly the right way. It will make you a better critic of the platforms you regulate or advise.

I have no financial relationship with Zentropi. I know the founders from Facebook, and I tried the tool because they asked me to. I’m writing about it because it sent me straight back to 2017 in the best possible way.


Editor’s note: This newsletter was developed in collaboration with Claude from Anthropic. My process: I provided a detailed brain dump of my experience building the political ad transparency tool at Facebook and my recent time with Zentropi. Claude drafted from those notes; I edited, shaped the framing, and verified all factual claims.
